Small Objectives, AI-Driven Architecture: How Abandoning Dark Lilly Built LillyQuest

Sometimes the only way to understand constraint-driven design is to ignore constraints entirely, realize you're drowning, and start over.

That's the story of Dark Lilly and LillyQuest.

Part 1: The Ambitious Mistake

In November 2026, I started Dark Lilly. A DOOM-like engine. 3D. OpenGL 4.1. Sector-based geometry with portal rendering. Room-over-room architecture. Full BSP tree implementation. A complete demo level in 10 weeks, working 1.5 hours per day.

On paper, it made sense. I'd written about building engines through constraints. DOOM's architecture—sectors and portals—felt like the perfect constraint. Limited but powerful. Reductive but elegant.

For about two months, it worked. I moved incredibly fast:

- Window setup, Silk.NET integration, OpenGL context initialization

- Sprite batch renderer, font rendering, 8-way sprite animation system

- Camera system (FPS-style), material system (JSON-based), shader hot-reloading

- Sky rendering, basic geometry loading, texture mapping

I'd completed Milestone 1.5 entirely. The codebase was clean. The architecture was solid. I had proven I could build a real 3D engine.

Then I looked at Milestone 2: BSP trees. Portal rendering. Frustum culling. Stencil buffer techniques for room-over-room depth. Collision detection in 3D space.

And I froze.

Not because it was impossible. But because I realized something: I wasn't learning anymore. I was drowning.

Every decision now required choosing between five competing technical approaches. The cognitive load of holding "correct material system design" AND "portal rendering visibility" AND "BSP tree construction" AND "physics collision" in my head simultaneously was suffocating.

This is what over-constrained ambition looks like.

Part 2: The Realization

I'd written in Dark Lilly's documentation:

"When I can explain the loop on a whiteboard without squinting, I know I'm on the right track."

But I couldn't explain Dark Lilly's next milestone on a whiteboard. Not clearly. Not confidently.

The constraint (a DOOM-like 3D engine) wasn't wrong. But it was too big for the time I had. It wasn't impossible to build. It was impossible to build and still learn from along the way.

Here's the distinction: A good constraint forces you to think clearly. A bad constraint forces you to suffocate.

I made a decision: Step back.

Not abandon the dream of a 3D engine. Just... not now. Not when I don't understand the fundamentals of how engines even work.

The pivot was simple:

- Go 2D first

- Build a roguelike instead of a shooter

- Focus on tileset-based rendering instead of 3D geometry

- Make each objective small enough to explain and complete in weeks, not months

- Do it publicly (open-source) to create accountability

This wasn't failure. It was strategic retreat with learning applied.

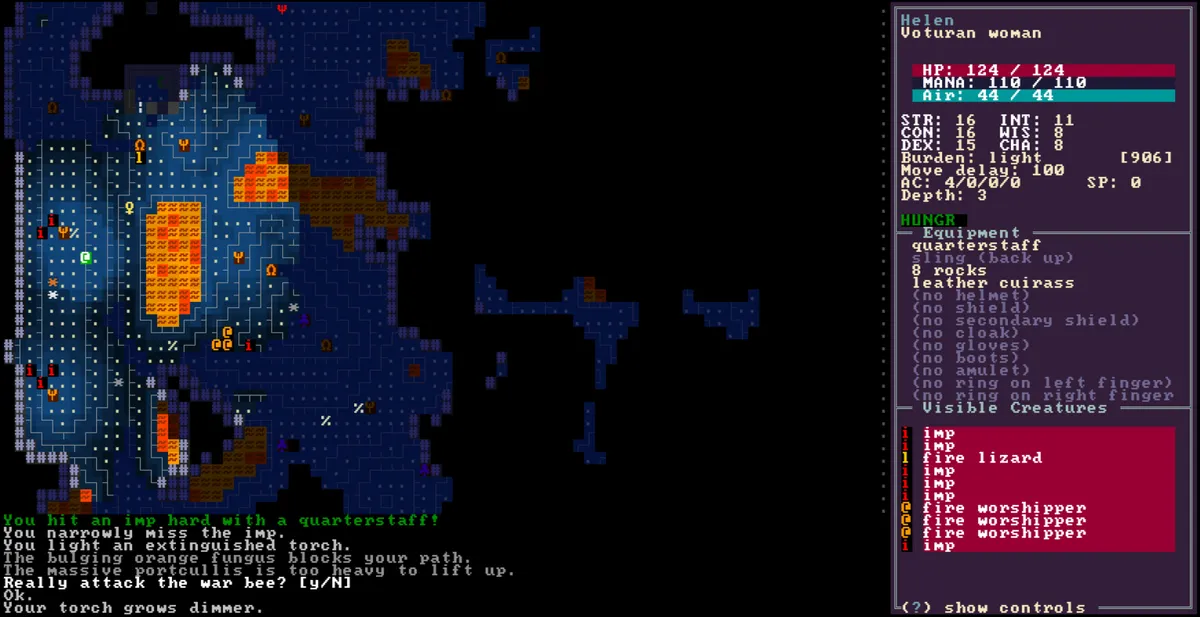

Sunlorn (Wander), one of the roguelikes that inspired me. The simplicity of the tileset, the clarity of the design, the focus on atmosphere and gameplay without visual clutter—this is what I want to achieve with LillyQuest.

Part 3: LillyQuest Foundation – The Right Constraints

LillyQuest inherits the architecture lessons from Dark Lilly's successful early milestones, but throws out the overwhelming scope.

Here's what "right constraints" look like:

3.1 Tileset-Based Instead of 3D Geometry

Dark Lilly's constraint: sectors and portals (complex geometry, portal visibility, stencil rendering).

LillyQuest's constraint: 32x32 tile chunks with procedural generation (still complex, but learnable).

Why? Because:

- Tilemap rendering is straightforward (SpriteBatch, different atlases)

- Chunk loading/unloading is a solved problem (load at distance, unload beyond horizon)

- Procedural generation is a separate problem you can solve incrementally

- No stencil buffer hacks needed
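The 32x32 chunk constraint comes down to simple coordinate arithmetic. Here is a minimal sketch in Python, used only for brevity. The function names are hypothetical and are not taken from the LillyQuest codebase. It assumes chunks are addressed by integer coordinates and that the world can extend into negative coordinates.

```python
from __future__ import annotations

CHUNK_SIZE = 32  # tiles per chunk side, as stated in the article


def world_to_chunk(tile_x: int, tile_y: int) -> tuple[tuple[int, int], tuple[int, int]]:
    """Split a world tile coordinate into (chunk coordinate, local offset)."""
    # divmod floors toward negative infinity, so tile -1 lands in chunk -1
    # at local offset 31 instead of being folded into chunk 0.
    chunk_x, local_x = divmod(tile_x, CHUNK_SIZE)
    chunk_y, local_y = divmod(tile_y, CHUNK_SIZE)
    return (chunk_x, chunk_y), (local_x, local_y)


def chunk_origin(chunk_x: int, chunk_y: int) -> tuple[int, int]:
    """World tile coordinate of the first tile (local offset 0, 0) of a chunk."""
    return chunk_x * CHUNK_SIZE, chunk_y * CHUNK_SIZE
```

The detail that tends to bite is negative coordinates. A language whose integer division truncates toward zero would need an explicit floor here.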

3.2 Modular Architecture (from Dark Lilly's partial success)

Dark Lilly taught me the value of clean layering:

DarkLilly.Core (Graphics primitives, asset managers)

DarkLilly.Engine (Systems, scenes, game objects)

DarkLilly.Maps (Map data structures)

DarkLilly.Scripting.Lua (Integration layer)

LillyQuest uses the same pattern but with refinement:

LillyQuest.Core (Graphics, assets, primitives)

LillyQuest.Engine (High-level: screens, UI, scenes, systems)

LillyQuest.Rendering (Separate rendering backend—learnable in isolation)

LillyQuest.Scripting.Lua (Lua integration)

LillyQuest.Services (Service layer—learned from Dark Lilly's missing gaps)

Each layer has one responsibility. If you need to understand how rendering works, you don't need to understand scene management. If you need to debug a scene transition, rendering is irrelevant.

Dark Lilly had this intention. LillyQuest enforces it.

3.3 UI System (The Unexpected Learning)

Dark Lilly's architecture had a gap: UI management. It didn't need much UI (just a basic menu), so it stayed minimal.

LillyQuest realized: If you're building a roguelike, UI is core. Inventory, dialogue, status screens, focus management. This isn't decoration—it's architecture.

So LillyQuest built this first:

// UIScreenControl: Base for all UI elements

// Hierarchical, composable, anchoring-based positioning

UIScreenControl (base)

├── Position, Size, Anchor, ZIndex

├── Parent/Children (tree structure)

├── IsVisible, IsEnabled, IsFocusable

└── Mouse/Keyboard input dispatch

// UIRootScreen: Manages entire UI hierarchy

UIRootScreen

├── UIFocusManager (keyboard navigation)

├── Modal support (windows, dialogs)

└── Hit-testing (which control should receive input?)

// Concrete controls

UIButton, UIWindow, UIStackPanel, UILabel, UIScrollContent
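To make the hierarchy and hit-testing idea concrete, here is a rough Python sketch. The `Control` class, its fields, and the rule "higher z-index is tested first" are illustrative assumptions, not the actual UIScreenControl implementation. Anchoring, focus, and input dispatch are left out.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Control:
    """Hypothetical stand-in for a UI control; position is relative to the parent."""

    name: str
    x: int
    y: int
    width: int
    height: int
    z_index: int = 0
    visible: bool = True
    children: list[Control] = field(default_factory=list)

    def add(self, child: Control) -> Control:
        """Attach a child and return it, so calls can be chained."""
        self.children.append(child)
        return child

    def hit_test(self, px: int, py: int) -> Control | None:
        """Return the deepest visible control under (px, py), or None.

        (px, py) is expressed in the parent's coordinate space.
        """
        if not self.visible:
            return None
        local_x, local_y = px - self.x, py - self.y
        if not (0 <= local_x < self.width and 0 <= local_y < self.height):
            return None
        # Children with a higher z_index are assumed to be on top, so test them first.
        for child in sorted(self.children, key=lambda c: c.z_index, reverse=True):
            hit = child.hit_test(local_x, local_y)
            if hit is not None:
                return hit
        return self
```

In this sketch a modal is just a full-size child with a high z-index. While it is visible it wins every hit test, which is one simple way to block input to the controls underneath.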

Dark Lilly didn't prioritize this. LillyQuest learned: Start with the hardest thing. If you can build a solid UI system, everything else is easier.

Part 4: Four Objectives That Define the Engine

The pivot forced a new question: How do you build a general-purpose engine while learning?

Answer: Through small objectives. Not arbitrarily small, but small enough to explain, big enough to teach.

Objective 1: Foundation (UI + Scene Management)

Status: Complete

What it teaches:

- How does a roguelike present information? (UI hierarchy)

- How do scenes transition? (Scene manager state machine)

- How does the engine manage entity lifecycle? (Global vs. scene-specific entities)

This was already done in the commits before the pivot. The recent work (unify focus and hit-test, use base children in debug control tree) shows the micro-optimizations that emerge when you actually use the system.

The lesson: A good foundation forces the next objective to be clear.
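The scene-transition and entity-lifecycle questions above can be sketched in a few lines. This is a simplified Python illustration under assumed names (`Scene`, `SceneManager`, `switch_to`), not LillyQuest's real scene manager. It assumes exactly one active scene at a time and models entities as plain strings.

```python
from __future__ import annotations


class Scene:
    """Hypothetical scene: owns the entities that live only while it is active."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.entities: list[str] = []

    def on_enter(self) -> None:
        # A real scene would build its UI and game objects here.
        self.entities = [f"{self.name}:entity"]

    def on_exit(self) -> None:
        # Scene-specific entities are dropped when the scene is left.
        self.entities.clear()


class SceneManager:
    """Tracks one active scene plus entities that survive every transition."""

    def __init__(self) -> None:
        self.scenes: dict[str, Scene] = {}
        self.current: Scene | None = None
        self.global_entities: list[str] = []

    def register(self, scene: Scene) -> None:
        self.scenes[scene.name] = scene

    def switch_to(self, name: str) -> None:
        target = self.scenes[name]  # an unknown scene name raises KeyError
        if target is self.current:
            return
        if self.current is not None:
            self.current.on_exit()
        self.current = target
        target.on_enter()

    def active_entities(self) -> list[str]:
        """Global entities first, then those owned by the active scene."""
        scene_entities = self.current.entities if self.current else []
        return self.global_entities + scene_entities
```

The split between `global_entities` and per-scene entities is the part that matters for later objectives: anything that should survive a transition goes in the first list, everything else is owned by the scene.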

Objective 2: Procedural Tilemap + Infinite Chunk Loading

Status: Planning

What it teaches:

- How do you generate reproducible worlds? (Seeding, noise functions)

- How do you handle memory efficiently? (Chunk loading at distance, unloading beyond horizon)

- What does "procedural" actually mean at the implementation level?

32x32 chunks. Simple noise (Perlin/Simplex). Serialization for loaded chunks. That's it. No biome variation yet. No dungeon generation. Just: "Can I generate and load a world?"

The constraint: The scene manager you built in Objective 1 now tells you exactly how to integrate this. Chunks are entities. They load into the scene. They unload when distance > threshold. Done.
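"Unload when distance > threshold" can be expressed as set arithmetic over chunk coordinates. The sketch below is an assumption-heavy illustration in Python: the function names, the square (Chebyshev) distance, and the separate load and unload radii are all choices made for the example, not details from the project.

```python
from __future__ import annotations

ChunkCoord = tuple[int, int]


def chunks_within(center: ChunkCoord, radius: int) -> set[ChunkCoord]:
    """All chunk coordinates within a square radius of center."""
    cx, cy = center
    return {
        (x, y)
        for x in range(cx - radius, cx + radius + 1)
        for y in range(cy - radius, cy + radius + 1)
    }


def plan_streaming(
    loaded: set[ChunkCoord],
    player_chunk: ChunkCoord,
    load_radius: int = 1,
    unload_radius: int = 2,
) -> tuple[set[ChunkCoord], set[ChunkCoord]]:
    """Return (chunks to load, chunks to unload) for the current player chunk."""
    if unload_radius < load_radius:
        raise ValueError("unload_radius must be >= load_radius")
    # Load everything near the player that is not in memory yet.
    to_load = chunks_within(player_chunk, load_radius) - loaded
    # Unload only what has fallen outside the larger radius.
    to_unload = loaded - chunks_within(player_chunk, unload_radius)
    return to_load, to_unload
```

Using a larger unload radius than load radius is a deliberate choice in this sketch. It keeps a player who paces back and forth across a chunk border from triggering a load and unload on every step.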

Objective 3: Room Generation (Dungeons + Palaces with Levels)

Status: Design phase

What it teaches:

- How do you represent hierarchical structures? (Rooms inside chunks, levels inside rooms)

- How do you handle non-Euclidean spatial layout? (A room's interior doesn't match its exterior size)

- What constraints does "roguelike design" impose on architecture?

This is where it gets interesting. A room can be:

- A dungeon (multiple levels, narrow corridors)

- A palace (multiple rooms, connected)

- A cave system (organic shapes)

Each has different generation algorithms. But the interface is the same: "Generate a room, return its entities and connections."
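One way to picture "different algorithms, same interface" is a small protocol. Everything in this Python sketch is hypothetical: the `Room` fields, the generator classes, and the toy generation logic exist only to show the shape of the contract, since Objective 3 is still in the design phase.

```python
from __future__ import annotations

import random
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Room:
    kind: str
    entities: list[str] = field(default_factory=list)
    # Exits expressed as local tile coordinates (an assumption for this sketch).
    connections: list[tuple[int, int]] = field(default_factory=list)


class RoomGenerator(Protocol):
    """The shared contract: generate a room, return its entities and connections."""

    def generate(self, seed: int) -> Room: ...


class DungeonGenerator:
    def generate(self, seed: int) -> Room:
        rng = random.Random(seed)
        levels = rng.randint(2, 4)  # placeholder: a dungeon has several levels
        stairs = [f"stairs_down_{i}" for i in range(levels - 1)]
        return Room("dungeon", stairs, [(0, rng.randrange(32))])


class CaveGenerator:
    def generate(self, seed: int) -> Room:
        rng = random.Random(seed)
        exits = [(rng.randrange(32), rng.randrange(32)) for _ in range(2)]
        return Room("cave", ["stalagmite"], exits)


def build_room(generator: RoomGenerator, seed: int) -> Room:
    """Callers depend only on the protocol, never on a concrete generator."""
    return generator.generate(seed)
```

A palace generator would slot in the same way. The calling code does not change, only the object passed to `build_room`.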

The constraint: Objective 2's chunk loading system tells you how big a room can be. Objective 1's entity system tells you how to place NPCs inside. Constraints propagate.

Objective 4: Biome System (The Unknown)

Status: Research

Here's the honest part: I don't know what biomes should do yet.

Common assumptions:

- Biomes affect tileset appearance (grass vs. stone vs. sand)

- Biomes affect entity spawning (desert has different creatures than forest)

- Biomes affect procedural generation parameters (cave density, water frequency)
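If those three assumptions hold, a biome could be nothing more than a record of parameters. The following Python sketch is speculative by design: the field names come from the list above, while the biome names, creatures, and numeric values are invented placeholders rather than anything decided for LillyQuest.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BiomeParams:
    tileset: str                  # affects tileset appearance
    spawn_table: tuple[str, ...]  # affects entity spawning
    cave_density: float           # generation parameter, 0.0 to 1.0
    water_frequency: float        # generation parameter, 0.0 to 1.0


# Placeholder values, for illustration only.
BIOMES: dict[str, BiomeParams] = {
    "forest": BiomeParams("grass", ("deer", "wolf"), cave_density=0.1, water_frequency=0.3),
    "desert": BiomeParams("sand", ("scorpion",), cave_density=0.2, water_frequency=0.02),
}


def params_for(biome: str) -> BiomeParams:
    """Look up the parameters a chunk or room generator would receive."""
    return BIOMES[biome]
```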

But I don't know. This is where the partnership with AI agents becomes crucial.

Part 5: The AI Partnership Pattern

Here's something I didn't expect: Explaining a problem to an AI agent forces architectural clarity.

When I write:

"I want to generate 32x32 chunks of tilemap using noise, seed the randomness so the same chunk always generates identically, and load/unload chunks based on player distance. How should I structure this?"

The AI responds with patterns I hadn't considered:

- Chunk serialization (store generated chunks or re-generate on demand?)

- Memory layout (pre-allocate chunk slots or dynamic allocation?)

- Seeding strategy (single global seed or per-chunk seeds?)

Each answer reveals the next architecture question.
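The seeding question has a fairly standard answer worth sketching: keep a single world seed and derive a per-chunk seed from it. The Python below is an illustration under assumptions. The function names and tile names are made up, and plain uniform randomness stands in for the Perlin/Simplex noise mentioned earlier, because the point here is reproducibility, not terrain quality.

```python
from __future__ import annotations

import hashlib
import random

CHUNK_SIZE = 32
TILES = ("grass", "stone", "sand", "water")  # placeholder tile names


def chunk_seed(world_seed: int, chunk_x: int, chunk_y: int) -> int:
    """Derive a stable per-chunk seed from the world seed and chunk coordinates."""
    # hashlib is used instead of hash() because Python's built-in hash of
    # strings is randomized per process, which would break reproducibility.
    key = f"{world_seed}:{chunk_x}:{chunk_y}".encode()
    return int.from_bytes(hashlib.blake2b(key, digest_size=8).digest(), "big")


def generate_chunk(world_seed: int, chunk_x: int, chunk_y: int) -> list[list[str]]:
    """Generate a 32x32 grid of tiles that is identical on every call."""
    rng = random.Random(chunk_seed(world_seed, chunk_x, chunk_y))
    return [[rng.choice(TILES) for _ in range(CHUNK_SIZE)] for _ in range(CHUNK_SIZE)]
```

Because the chunk depends only on `(world_seed, chunk_x, chunk_y)`, it can be thrown away on unload and regenerated later. That makes "store or re-generate?" a performance question rather than a correctness one, at least until the player starts modifying tiles.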

This isn't me delegating design to the AI. It's me using the explanation itself as the design process. The AI is just the mirror.

Over time, a pattern emerged:

1. Define the objective clearly

"Build a procedural tilemap system with 32x32 chunks"

2. Explain it to an AI agent

"Here's my scene manager. Here's my entity system.

How should chunks fit in? What's the interface?"

3. Implement the suggested pattern

(This is the actual learning—building it teaches you more than listening)

4. Use the result

"Hmm, chunk loading is slow because X. What's better?"

5. Next objective emerges naturally

"I need biome variety. How does that affect generation?"

This cycle is fast. Not because the AI writes code (it does, sometimes), but because articulating the problem IS the problem-solving.

Part 6: The Lesson

Here's what Dark Lilly taught me that LillyQuest applies:

6.1 Constraints Aren't Optional

Dark Lilly's constraint (3D DOOM-like engine) was intellectually interesting. But it was too big.

LillyQuest's constraints are:

- Tileset-based 2D rendering (not free-form 3D)

- Roguelike game (not generic engine)

- 32x32 chunks (not infinite-detail procedural)

- Small, sequential objectives (not 10 weeks of parallel complexity)

These constraints don't limit what I can build. They clarify what I should build.

6.2 The Whiteboard Test Still Applies

From my original Dark Lilly article: "When I can explain the loop on a whiteboard without squinting, I know I'm on the right track."

LillyQuest's loop is simple:

1. Scene manager loads/unloads chunks based on player position

2. Chunks contain entities (tiles, NPCs, items)

3. Entity system updates/renders them

4. UI shows player state

5. Repeat

That's whiteboard-explainable. Dark Lilly's loop wasn't (once you add BSP trees and portal visibility).
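As a sanity check on the whiteboard claim, the loop fits in one short function. This Python sketch is deliberately naive and uses hypothetical names throughout: it keeps only the chunk under the player loaded, treats "update" as incrementing a counter, and skips rendering entirely.

```python
from __future__ import annotations

from dataclasses import dataclass, field

CHUNK_SIZE = 32


@dataclass
class Entity:
    name: str
    age: int = 0  # number of updates this entity has received


@dataclass
class World:
    chunks: dict[tuple[int, int], list[Entity]] = field(default_factory=dict)


def tick(world: World, player_tile: tuple[int, int]) -> str:
    """One pass of the loop; returns the UI status line for this frame."""
    # 1. Load the chunk under the player and unload every other chunk.
    here = (player_tile[0] // CHUNK_SIZE, player_tile[1] // CHUNK_SIZE)
    world.chunks = {here: world.chunks.get(here, [Entity(f"tile@{here}")])}
    # 2 and 3. Chunks contain entities; the entity system updates them.
    for entities in world.chunks.values():
        for entity in entities:
            entity.age += 1
    # 4. The UI shows player state.
    count = sum(len(entities) for entities in world.chunks.values())
    return f"chunk={here} entities={count}"
```

Step 5 is simply calling `tick` again on the next frame.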

6.3 Small Objectives Aren't Laziness

They're architectural clarity through incremental discovery.

Each objective is sized such that:

- It teaches something non-obvious (not busy work)

- It can be completed and integrated in small steps, over days rather than weeks

- It creates clear constraints for the next objective (not random)

- It can be explained to an AI agent in a single conversation (forcing you to understand it first)

6.4 Failing Teaches More Than Planning

Dark Lilly didn't fail. It taught me what happens when ambition exceeds available cognitive load.

LillyQuest is the application of that learning. Not in the design, but in the discipline: Small wins accumulate. Momentum compounds. Shipping feels good.

Part 7: What Comes Next

I'm currently working on Objective 2: Procedural Tilemap.

The goal: A 32x32 chunk generates and loads in < 1 frame. Multiple chunks coexist in memory. The scene manager handles loading/unloading seamlessly.
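"Under one frame" only becomes testable once it is a number. Here is a small Python sketch of that arithmetic, assuming a 60 FPS target. The article does not state a frame rate, so 60 is an assumption, and the helper names are hypothetical.

```python
import time
from typing import Callable


def frame_budget_ms(target_fps: float) -> float:
    """Time available for one frame, in milliseconds."""
    return 1000.0 / target_fps


def measure_ms(work: Callable[[], object]) -> float:
    """Run work once and return how long it took, in milliseconds."""
    start = time.perf_counter()
    work()
    return (time.perf_counter() - start) * 1000.0


def fits_in_frame(elapsed_ms: float, target_fps: float = 60.0) -> bool:
    """True if the measured work would fit inside a single frame."""
    return elapsed_ms < frame_budget_ms(target_fps)
```

At 60 FPS the budget is about 16.7 ms for all 32 x 32 = 1024 tiles of a chunk, and that budget is shared with update and rendering. A single measurement is noisy, so in practice it makes sense to time many chunks and look at the worst case.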

This is a learnable problem. Not trivial (procedural generation has pitfalls), but scoped.

Once that works, Objective 3 (Room Generation) will reveal itself. "How do I fit rooms inside chunks? How do I handle non-Euclidean interiors?"

And Objective 4 (Biomes) won't be a mystery anymore. It'll be: "Given that I can generate chunks and rooms, what parameters should vary per biome?"

Closing: From Drowning to Clarity

The meta-lesson of LillyQuest isn't about roguelikes. It's about choosing the right constraints.

Dark Lilly showed me what happens when you pick constraints that are intellectually interesting but practically overwhelming.

LillyQuest shows what happens when you pick constraints that are:

- Specific enough to guide architecture

- Small enough to complete incrementally

- Learnable through explanation and implementation

- Public enough to create accountability

The engine I'm building won't be as sophisticated as Dark Lilly's would have been. But it'll be finished. And along the way, I'll understand exactly how it works.

And maybe that's the real constraint: Understand what you build.

This article is part of a series on building LillyQuest. Next: Objective 2 - Procedural Tilemap Generation. The code is open-source: github.com/tgiachi/LillyQuest